IWC Berlin 2025 in too few photos

After many years away, I have returned to in-person IndieWeb events, for IndieWebCamp Berlin 2025!

In past years, I have tried to capture my experience for each day and session in a long-form blog post, with thoughts on sessions, project ideas, progress made, ideas for the future, etc.

I’m pretty tired, though, so instead here is a collection of photos from my phone. It is both too-few and yet too-many!

Saturday

We had a good turnout, and I was impressed with how many folks demo’d their personal sites, in whatever state they were in, and shared their plans and hopes to improve them! ❤️

You can find a recap of the Intros session on the IndieWeb wiki.

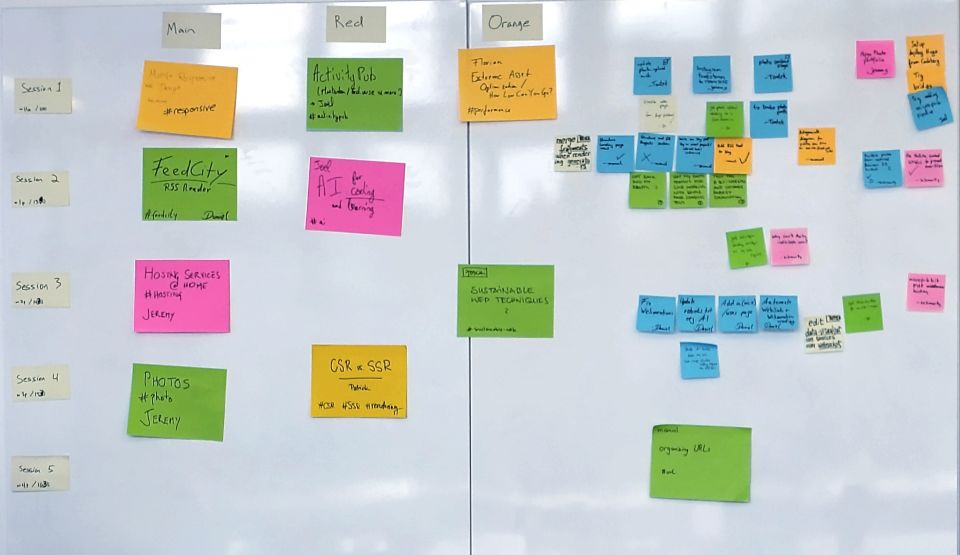

After intros we took a short break for coffee and the restroom, then inscribed the runes and constructed the grid for summoning our schedule for Saturday.

With our futures committed to ink and paper, we had our first short sessions. Then it was time to break for lunch.

Fed and caffeinated, we returned to our sessions.

You can find a list of sessions, each with links to notes (and, eventually, videos), on the IWC Berlin 2025 schedule page.

Before 5pm (1700) we cleaned up and moved out. I was beat, so I headed back to where I’m staying for food, before meeting up with Amy and our friend Jessica, who showed us KPop Demon Hunters. I loved it. 🥹

Sunday

Once caffeinated, we returned to the scene of Saturday’s summoning. We each inscribed small prayers for the day on small paper squares and arranged them next to the scheduling grid as a blessing.

Then everyone hacked on their websites! Until lunch time!

A short couple of hours of hacking later, it was time for Demos. Everyone shared the projects they had tackled, showed their progress, and talked about future work.

After demos it was time to wind it down, clean up, photograph and take down the schedule board, pack up our pins and stickers, and say our goodbyes and see-you-laters.

It’s Over!

It was weird to be back, and it was good to be back. To catch up after a long time away, to continue conversations as if no time had passed at all, and to meet new friends in meatspace.

Thanks to everyone who made this possible! An incomplete list would include:

- Our hosts at Mozilla Berlin

- Organizers Tantek and Joschi

- Fellow volunteers Jo and Daniel

- Expert remote Zoom wrangler David

- Everyone who attended, whether you were in-person or remote. Thank you for contributing your time and your thoughts!

About those Projects

I had an idea of a couple of “easy” projects, but ended up spending most of my time fixing up some posts with images I broke when I deleted a bucket from Amazon S3, thinking I had already updated those posts. I hadn’t! So, I dug into my backups, re-uploaded, and updated 50-something images across 30 or so posts, mostly from my February 2011 thing-a-day posts.

My first easy project was to fix up some bad markup and styles where YouTube embeds were breaking out of my layout at small screen sizes. This was largely due to my awful old templates and styles, and I ended up fixing about a half-dozen posts by hand.

The second “““easy””” project was to try and figure out why I couldn’t sign in to the IndieWeb wiki, using my own IndieAuth server.

It seems like the indielogin.com service that the IndieWeb wiki uses has drifted from the IndieAuth spec, in anticipation of an update to the spec that has not yet materialized.

It’s too much to recap here, but you can find the chat log where I bother Aaron Parecki about it.

A little while later, he told me to “try again”, and…